The AI Morning Read March 18, 2026 - The AI That Understands Hardware: Inside InCoder-32B

Summary

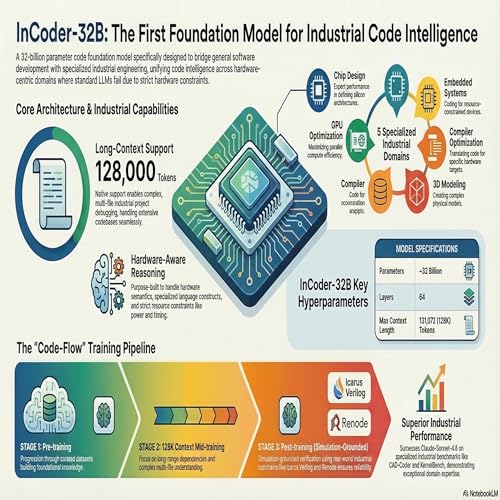

In today's podcast we take a deep dive into InCoder-32B, the first 32-billion-parameter code foundation model purpose-built to tackle the unique and rigorous demands of industrial programming. Unlike general-purpose code models, which often degrade when faced with hardware semantics, this unified model specializes in five critical domains: chip design, GPU kernel optimization, embedded systems, compiler optimization, and 3D modeling. Its capabilities are the result of a rigorous three-stage "Code-Flow" training pipeline: pre-training on curated industrial data, progressive context extension up to 128,000 tokens, and execution-grounded post-training. To ensure that the generated code respects strict hardware constraints and physical realities, the model was fine-tuned in simulated production environments where the code is actually executed and verified. As a result, InCoder-32B establishes strong new open-source baselines across these specialized domains, even outperforming proprietary models like Claude-Sonnet-4.6 on complex tasks such as GPU optimization and CAD geometric modeling.